Reasons to believe

The computational systems for producing realistic visual "fakes" continue to improve. This New Yorker article by Joshua Rothman takes a long look at "synthetic realism" — using neural nets and machine learning to generate images and videos that appear as if they are recordings of reality. Drawing on patterns detected in massive image archives (in some cases compiled from social media), they can create passable representations of events that never occurred. (The image above, by Mike Tyka, captures the process of adversarial networks refining the generated faces of people who don't exist.)

Many seem to fear that AI reality generators will invalidate visual evidence altogether, allowing fakes to be made on demand that seem to prove anything, while casting doubt on "genuine" representations (if that is not already oxymoronic). The concern seems to be that people will be newly susceptible to blindly believing what they see, suddenly more vulnerable to being led to some false conclusion, as if that weren't often already the consumer's deliberate goal. Information consumption is typically no different than entertainment consumption: There is the expectation that information will be given a meaningful form, a narrative shape that will allow for suspension of disbelief or some satisfying sense of closure — this more than accuracy dictates whether an experience feels real or true. "Reality" is an emotional experience, an insider feeling of intimacy with "facts," a comfort level where you already know what they mean, what their purpose is, without having to go through some rational or logical process of persuasion.

If anything, deepfakes and the like make it harder to consume information with the level of gullibility (or "confidence," if you prefer) necessary to make the experience satisfying. Their prominence will force consumers to be more skeptical in general about the information they consume, as they help illustrate how all of what is taken to be real is in some ways "synthetic," produced. People enjoy being tricked under conditions they control. Perhaps going on social media for "news" already works that way; it's like paying to enter the freak-show tent, only we're seduced not by a carnival barker but by the approval of friends who've already been inside.

Representations of any sort are always reductive edits of the full spectrum of reality; they never simply document incontrovertibly what happened somewhere at some time. They are not passive; they produce an experience, a different one in each person who consumes the representation. That experience might be the apparent thrill of verification — the giddy sense that something we should probably doubt might be real. Or it might clarify what has eluded representation, which can then be taken as the higher truth. But there is no experience of the real that is somehow direct and unmediated. In fact, that is simply another of the fantasies potentially stoked by certain kinds of representations: They remind us of a time that never existed, when images and the people who made them could be automatically trusted. Fakes help us pretend we used to enjoy that kind of comfort; they generate synthetic nostalgia.

Drawing on conversations with computer scientists, Rothman points out that the predictive logic of synthetic media can be applied to more than just images, as when "your Facebook news feed highlights what 'people like you' want to see." This algorithmic sorting process attempts to extend a plausible version of yourself out ahead of you, so that you never stray beyond the boundaries of your established desires, your anticipated identity. The news feed is no less a synthetic universe for being made out of "actual" posts, even if those posts don't contain fake news. You can use Facebook to fill in the blank spaces of your life, much as you can use content-aware fills in Photoshop to extend patches of grass or cover over deletions in the foreground. The platform approximates your identity and turns it into an algorithmic filter that can produce reality as necessary, filling in gaps in a way that is convincing or satisfying.

Gmail's efforts to write your emails for you are another expression of the same predictive logic, another offer to extend your presence automatically (and thereby effectively exclude it). In this New York Times Magazine column, John Herrman describes how this process inverts machine learning, so that the automated replies begin to train the users, who function less as creative human beings and more as adversarial "discriminators" akin to the neural nets that sift through AI-generated proposals and reject those that fail to conform to some pattern drawn from the past.

If a canned reply is never used, this is a signal that it should be purged; if it is frequently used, it will show up more often. This could, in theory, create feedback loops: common phrases becoming more common as they’re offered back to users, winning a sort of election for the best way to say “O.K.” with polite verbosity, and even training users, A.I.-like, to use them elsewhere.

Human creativity, too, is reduced in this framework to a "generator network" that is subject to the screening of an AI-powered discriminator. We write things, and the system decides whether or not they seem like "realistic" things to say, as when text is autocorrected. The past history of communication becomes the horizon for what can be said now.

Autocompletion, of course, is a form of deskilling. It outsources the work of whatever adversarial network might reside in our brain that assesses the acceptability of what we are saying before it is typed into an interface. It encourages the atrophy of that particular feat of imagination, of anticipating how a particular act might be received. Instead it encourages unreflexive action, simultaneously spontaneous and automaton-like, that can be evaluated after the fact for what it means, without any pesky intentionality interceding. That is to say, autocomplete and smart reply are means for imposing behaviorism. (As is most workplace bureaucracy, which is why the headline for Herrman's piece fatalistically suggests "You Already Email Like a Robot—Why Not Automate It?")

Rothman similarly points out how predictive sorting of images creates feedback loops that reinforce certain representational tropes, the familiar cliches of social media images: "In addition to unearthing similarities, social media creates them. Having seen photos that look a certain way, we start taking them that way ourselves, and the regularity of these photos makes it easier for networks to synthesize pictures that look 'right' to us." We start to re-create the synthetic reality because it seems more real than the unpredictable chaos that is "actual" reality. Or rather, we see "reality" as a set of particular patterns, just as the machines do. Social media serve as a vector for spreading this way of seeing.

This is an environment in which cliches feel more real than idiosyncratic or nuanced forms of expression — cliches are more likely to appease the "discriminator" — whether that is a literal AI adversarial network or the network of social media users who are ranking what we express.

Whether things "look right" isn't a matter of accuracy; rather it is produced through certain genre conventions. We tend to live within the limits of those expectations that make us feel as though we are always getting all the reality we need — so much so that the world beyond it is unimaginable, nearly impossible to believe.

Representations, if they are meant to be taken as primarily about their own "realness," require novel ways of signaling and foregrounding that "reality" — contrived ways of framing things to connote "documentary fidelity" or "unlimited access" or "authenticity." These shift as certain techniques or markers become overfamiliar, banal to the point of invisibility.

There has long been a tendency to conflate "authenticity" with proximity, with access, with "really being there." But ubiquitous communication is eroding that idea (as McLuhan probably anticipated somewhere). Global networks operating instantaneously make it so that we are all, in a sense, really there for everything, so that being there in itself proves nothing. This changes how people emotionally experience "documentary" photography, whose revelations can no longer be counted on to feel "real" or "intimate."

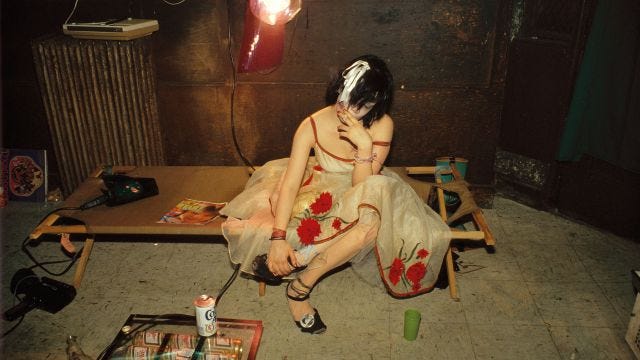

In a 1990s-era essay about photographer Nan Goldin, Liz Kotz argued that the reality effect — the intimacy and access that certain images like Goldin's may seem to grant — can be exhausted by overexposure. "When the same images are reproduced too many times, in too many places, and are liked in the same way," she argues, "this intimacy is inevitably compromised."

Naturally when I read this, I could only think of images on Instagram popping up on thousands of followers' screens, gathering in their likes. The more idiosyncratic they are, the more flattened out they become by the distribution apparatus. "If we all feel the same sentimental rush before the same image, it ceases to be poignant, and instead becomes trite, coded, formulaic ..." Kotz continues. "Few things are more repellant than a programmed sense of 'intimacy' or a regulated experience of 'accident.'"

Maybe so, but as Kotz goes on to argue herself, maybe the trite becomes the marker of the intimate, the real. The poignancy shifts away from idiosyncrasy and toward what is most liked. Posting an image to a feed or story indicates an aspiration to fit into a kind of media programming — you want the image to be chosen by an algorithm; you want its intimacy conveyed not by its content but by the enforced ephemerality of a story. When images are posted to social media, they all want to be liked in the same way, by definition. But that is not to say that users on the platforms don't experience these images as intimate — instead, the connotation of "intimacy" is detached from the conceit of special access, the sensationalized insider's look into a milieu that Goldin's work promised.

Images now may have to signal the opposite to be felt as intimate: Rather than try for uniqueness (and sadly fall into triteness), they can begin as trite and formulaic in their form and content and thereby testify to the significance of the particular unique person who has instantiated the cliche. Such images accomplish their purpose not by being more original or spontaneous or authentic; those attitudes are more cliched than the cliched poses themselves. Instead the images are "relatable": open to vicarious consumption by virtue of their predictability, which at the same time makes who posted them more important than what they specifically represent. When a million people post sunset pictures, each of those posters is one in a million.

In critiquing one of Goldin's subjects' eagerness to be photographed and displayed, Kotz writes, "it's as if, in our current lives of fragile identity and purely privatized experience of social power (I can't change the world, but I can change my hair color), our very existence as subjects must be constantly confirmed by the gaze of others." Not sure why it "must" be confirmed; maybe we just want as much social confirmation as our social sphere can provide. Media have developed precisely to expand the ability to pursue such confirmation (and devalue it). Rather than rely only on "the gaze of a lover or intimate" for recognition and for authentication, we have broader networks.

This authentication may depend not on our revealing specific verifiable personal truths but on a different kind of sharing. Kotz references a mundane skyline photo by Mark Morrisroe, arguing that its banal familiarity offers viewers a different way in than Goldin's subcultural documentary style affords. "It's that very ordinariness that makes it work: that the image could have been anyone's, that you might have taken that image if you'd been there then, feeling like that ... Morrisroe's work allows viewers to project themselves and their own pasts into the image while also insisting on its specificity as a document of his life, not ours." Jennifer Doyle cites this passage in Sex Objects, arguing that Kotz sets up a contrast between Goldin's "almost anthropological attempt to get at the 'truth'" and the "figuration of 'truth' as a pose." Another way of figuring that contrast is between the idea that truth is uncovered or revealed, and the idea that truth is built through a collective buy-in to something that's been made accessible and obvious. Generic images allow for a collective buy-in, but each viewer projects their own individualism. But none of that experience of individuality pivots on uniqueness or originality.

This seems to me a good way to understand the "authenticity" of formulaic influencer content (and formulaic advertising more generally). It is "authentic" because it invites vicarious participation and excludes any details idiosyncratic enough to inhibit identification and aspiration. That sort of authenticity feels as though it is leaking out of marketing contexts and underwriting identity construction more generally. Kotz notes the "self-conscious self-fashioning" of the photographers she discusses, arguing that "Morrisroe's works continually reveal how subjectivity itself is propped up on an amalgam of desired images: ideal images which we may strive towards, yet to which we feel perpetually inadequate." She quotes the artist Jack Pierson, to whom she attributes a similar aesthetic:

My work has the ability to be a specific reference and also an available one. It can become part of someone else's story because it's oblique and kind of empty stylistically ... By presenting certain language clues in my work, people will write the rest of the story, because there's a collective knowledge of cliches and stereotypes that operates.

Autocomplete and smart replies work by a similar logic, codifying the "collective knowledge of cliches and stereotypes" and making their operation more efficient. They automate our participation in the collective at the level of shared phrases, common ways of expressing ourselves and depicting our reality. The automated function, or the neural net behind it, recognizes us; it sees that we belong to the community and shows us how to best express that belonging. It obviates the need for our having to recognize each other directly. Community can become another content-aware fill, automatically populated with familiar expressions of approval. These are not only social media metrics, amplified by algorithmic sorting that places the content most likely to be liked in front of likable people, but also our autocompleted thoughts, and a secure sense of having been told the right things to say and when to say them. Then we can be confident we have spoken the truth.