Someone has hung the mirror here

With most dystopian lines of tech critique, the case can be made that they give too much credit to industry claims about AI, or virtual reality, or the metaverse, or whatever concept venture capitalists are trying to push. It's generally a good idea not to take a tech company's stated ambitions at face value or as inevitably coming to fruition, though I often fall into this trap myself. What tech companies claim to want to achieve is usually damning enough, but sometimes playing it out on their own terms can seem to reveal how much more warped their aspirations are and how much more damage they would have to do to make their ambitions realizable. I tend to be skeptical that technology developed under such auspices can ever be redeemed.

In a recent Harper's essay, Joe Bernstein argues that when critics claim that tech platforms have a vast capacity for spreading targeted disinformation and effectively brainwashing users, they are symbiotically reinforcing the message that the platforms themselves are trying to sell to advertisers. "What could be more appealing to an advertiser, after all," Bernstein asks, "than a machine that can persuade anyone of anything?" His point is that tech platforms ultimately benefit from accusations that they are spreading efficacious lies and warping public opinion; it supports the idea that their data collection and behaviorist targeting techniques work and that marketers can harness them for their own purposes. "Big social-media platforms shared a foundational premise with their strongest critics in the disinformation field: that platforms have a unique power to influence users, in profound and measurable ways," Bernstein writes. When critics raise alarms about how social media platforms purportedly cause people to believe absurdities, they are doing the tech companies' own marketing for them.

As Bernstein points out, advertising, more than anything else, has to advertise its own ability to irresistibly persuade. He quotes Hannah Arendt's dictum that "the psychological premise of human manipulability has become one of the chief wares that are sold on the market of common and learned opinion." Out of context, that line seems to overstate our capacity for autonomy. Humans are manipulable, after all, and making individuals fully and personally responsible for any bad decisions they end up making would ignore how the available choices themselves were socially conditioned. It's not that people can't be persuaded of things; it's that it is virtually impossible to break down what exactly persuaded them to take which particular actions. The advertising industry's most important fiction is that it can provide a framework that sorts that out, one that can trace flows of attention and find the correlations that should be understood as causation. Bernstein cites Tim Hwang's Subprime Attention Crisis, which argues that digital advertising promises more transparency but delivers more opacity, mainly to disguise how ineffective targeted ads are.

But focusing on how persuasive targeted ads actually are may also miss the point. Ad targeting is a great alibi for the many layers of surveillance deployed in its service. The ultimate purpose of that surveillance is not persuasion but more direct forms of control: what Oscar H. Gandy Jr. described in a prescient 1993 book as the "panoptic sort." From this point of view, what looks like "targeting" is better described as audience segmentation. The target is not seen as something predefined, but as something that is refined and processed, a demographic constituted through being targeted. Surveillance functions as "a difference machine" that allows companies to profit from discrimination and other forms of exclusion and predatory inclusion.

As Gandy defines it, "the panoptic sort operates to increase the precision with which individuals are classified according to their perceived value in the marketplace and their susceptibility to particular appeals." That sounds a bit like the sort of claim that Bernstein is arguing is oversold and plays into the hands of "Big Disinfo." But Gandy's claim is that this kind of sorting presents people with limited and circumscribed choices, which in turn reduces their ability to seek out and process information on socially shared terms. It doesn't eliminate the individual's agency so much as obscure the way it is being curtailed and redirect its operation toward futile or prescripted avenues.

On this view, advertising works not via individual ads persuading you to buy a certain product but en masse, as a means of invalidating the idea of a public sphere (a collective way of vetting and disseminating information for a common good) and degrading our information-processing skills. Through the algorithmic pre-sorting of who sees what information, Gandy argues,

the communications competence — that Habermas suggests is required before the ideal speech situation can emerge — will be systematically denied to individuals and groups. Although communication may take place within more narrowly defined sociocultural communities, the ability of people to engage in communication across these lines will be lost as segmentation moves apace.

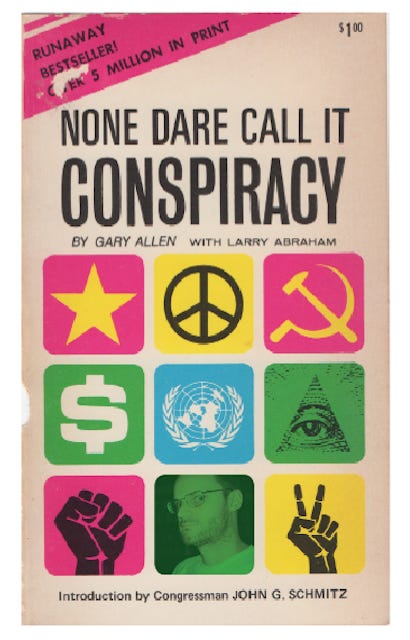

It actually turned out worse than that, since broadcast social media lets people shout at each other across those lines and harass each other relentlessly, with neither side comprehending the other. Targeted ads testify to the idea that we are always already divided and subdivided as a people, that nothing can be trusted, and that you are on your own to "do your own research" about what is true. No one is more susceptible to ad hoc manipulation than a nihilist.