The shape of the grape and the taste of the wine

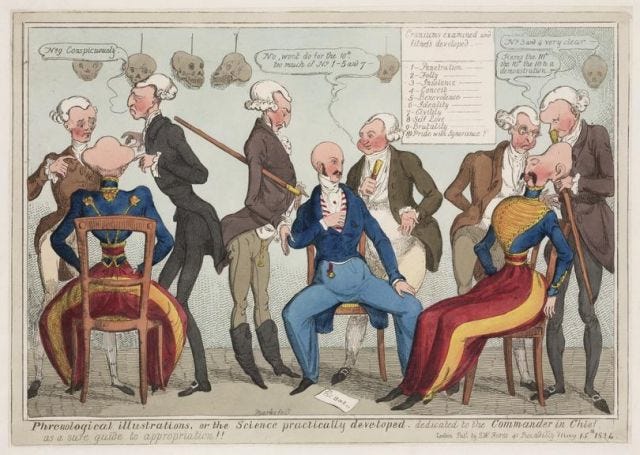

One of the fundamental critiques of AI in its current iteration — that is, the process of using massive data sets to train machine learning systems to make probabilistic classifications, often about human behavior — is that it is simply phrenology by another name. For instance, in Atlas of AI, Kate Crawford compares "emotion recognition" technology (in quotes because the technology does no such thing) to phrenology (deducing character from the shape of the skull) and physiognomy (deducing character from the shape of the face). What lots of AI applications purport to do is deduce character from the shape of data points.

All of these processes depend on the assumption that anything that can be measured externally about a person (anything that can be datafied) indicates something definitive about their inner nature. More precisely, they presuppose an "inner nature" in order to reduce it to something that can be measured externally. Then the external measurements can be used to ascribe a static inner nature to people, or resolve doubts about what they "really" feel, as if this were detachable from what they consciously experience and express. As Crawford writes about emotion detection:

It returns us to the same problem we have seen repeated: the desire to oversimplify what is stubbornly complex so that it can be easily computed, and packaged for the market. AI systems are seeking to extract the mutable, private, divergent experiences of our corporeal selves, but the result is a cartoon sketch that cannot capture the nuances of emotional experience in the world.

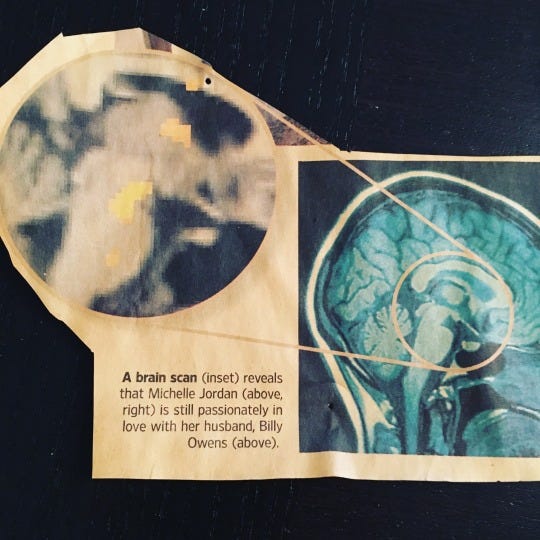

My favorite example of this is brain-scan technology:

To cite a brain scan image as proof of Michelle's "passionate love" for Billy, you have to at some point define what "love" is and explain how it can be different from what Michelle says it is. Eventually you will need to silence Michelle or render her view on the matter obsolete or mendacious. Such is the ideological project of all AI classifiers, which try to offer a rationale for believing that correlations in (inherently limited and ultimately nonrepresentative) data sets should be taken as understanding and explaining individuals better than they can explain themselves.

It follows that these machine-derived understandings can be described as discovery tools, means of teaching people about themselves or recommending to them what they already are but somehow didn't know. Obviously, this whole pretense offers an elaborate alibi for efforts to change or manipulate people: We didn't lie to you; you were lying to yourself, and we just showed you what you really want. This brain scan proves it. The empirical odor of these claims presumably makes them more persuasive, makes people more willing to surrender to them. It's nice to have an excuse (the algorithm made me do it); it's nice to be saved the effort of constructing or developing a desire, with all the baggage and social implications of that work, and just have an algorithm impute one to you that you can still claim as really your own.

But we may also enjoy the way this process implicitly synthesizes all this data collected from countless numbers of other people to say something special about ourselves as individuals. It condenses the social processes that make us up and makes them disappear into a tautology, such that my brain scan says who I am, and who I am dictates the significance of a brain scan (and not all the social interactions that underwrote all the data that has ever been collected and made any analyses possible). "Data isn't collected solely because of what it reveals about us as individuals," Salomé Viljoen notes in a recent article for Logic magazine. "Rather, data is valuable primarily because of how it can be aggregated and processed to reveal things (and inform actions) about groups of people. Datafication, in other words, is a social process."

Yet thanks in large part to how our data is reflected back at us, we tend to experience datafication as individuating: an Althusserian "Hey you" that singles us out as a unique subject. All datafication is surveillance, of course, which means, as Viljoen (drawing on Foucault) explains, that it participates in an effort to render people predictable, or to subjectivate them so that their ostensibly "free" behavior is always circumscribed by horizons they don't recognize. Hence algorithmic feeds, recommendation systems and AI generally should be understood as completing a circuit of surveillance: they convert the trove of data that's been collected into behavioral change, into narrowed-down, normalized, predictable people.

But datafication goes a step beyond raw panoptic surveillance in that it posits the terms by which people are to be made commensurate and feeds those back into social practice. In other words, it suggests that the terms of sociality take the form of these data categories, which become how we are legible, how we are particular, how we are individualizable. At the same time this process obfuscates how our individuality depends on sociality, making data seem like "personal data."

As I argued here (and probably 37 other places), data surveillance capitalizes on our desire to have our "real selves" captured behind our backs and revealed to us; this rationalizes surveillance's "inevitability" and makes it a prerequisite to self-knowledge. One of the seductive things about surveillance is that you know you are making an impression (as so much data) without having to try to make an impression (and risk being "cheugy"). You can trick yourself into thinking that the effort to be natural has become superfluous, and that the "naturalness" will be constructed for you from that data for your later consumption. Surveillance lets some users chart a path to "being natural" without immediately feeling unnatural and self-conscious about how they tried to plan their spontaneous self. So when I want to feel "authentic," I can look at a list of books Amazon recommends for me and simultaneously delight in how well my data pegs me and in how much of me escapes Amazon's understanding.

In The Psychic Life of Power, Judith Butler analyzes this conjunction of subjectivity and subjection: how a "subject is formed in subordination," which makes us complicit, attached to a degree to our subjection and dependent on those forces of "regulatory power" (like algorithms, for instance) that posit who we are. Butler claims we extract tangible experiential benefits along with the ideological indoctrination. "A critical analysis of subjection," Butler argues, "involves:

(1) an account of the way regulatory power maintains subjects in subordination by producing and exploiting the demand for continuity, visibility, and place;

(2) recognition that the subject produced as continuous, visible, and located is nevertheless haunted by an inassimilable remainder, a melancholia that marks the limits of subjectivation;

(3) an account of the iterability of the subject that shows how agency may well consist in opposing and transforming the social terms by which it is spawned."

The "inassimilable remainder" — the part of the self that rejects how it is being subjectivated — becomes visible in how recommendation systems fail, but it may only mask their larger successes in subjectivating us. The failures foreground the fantasy that we are still somehow escaping the system that is structuring our sense of our elusiveness.

Similarly, the degree to which we want to be seen as individuals or believe ourselves to be unique individuals without any dependence on society for our traits and proclivities can in turn be used against us this way: It contributes to making AI's deductions and reductions of the "inner" to the "outer" seem acceptable, insofar as they purport to say something about us as individuals and not as dependent on a society for our sense of self-understanding. That is to say, phrenology is a tempting form of self-knowledge precisely because it abstracts individuals from how they are situated in society and how that conditions who they are.

The critique of phrenology is as old as phrenology itself. Hegel has a long section about it in Phenomenology of Spirit, something that seemed to puzzle some 20th-century commentators but which makes perfect sense now, with AI making the temptation of phrenology unfortunately relevant again. According to Terry Pinkard, in Hegel's Phenomenology: The Sociality of Reason, Hegel "takes up various pseudo-sciences of his time ... to show how these could not be sciences and thus how the attempt to construct a 'science of self-identity' would be a false start," a false start that has started yet again with the attempts to predict personality from data. Pinkard goes on to explain Hegel's idea of what sort of self-understanding is tenable, and why it rules out pseudosciences not on the grounds of their inaccuracy but on the grounds of their incompatibility with social life:

Individual self-consciousness is one's taking oneself to be located in a determinate "social space"; an individual's self-identity is made up of his actions in that "social space" and how those actions are taken by others. The "social space" is both the basis of the principles on which actions are taken and the basis of the interpretations of those actions by others. Self-identity cannot be something determinate and "fixed" that an individual could have outside of acting in any determinate "social space."

The pseudosciences of self-identity, however, see it as exactly that: as something that is completely formed and is then expressed in actions. For these pseudosciences, self-identity (or "character") is taken by them as something formed, fixed, and inner, whereas its expressions are taken as something that is outer, something available for observation. The pseudosciences of self-identity thus hope to find the laws that correlate the ways in which "inner character" is necessarily expressed in outer observable behavior.

Of course, that is also what various AI approaches to prediction try to do. Behind the various pseudoscientific proposals, Pinkard writes, is "the assumption that a person's character is something fixed and indifferent to its social expression such that it would be what it is without its being expressed in any actions at all."

In other words, pseudosciences (and AI) are part of a delusional or ideological effort to conceive of individuals as having a "self" that doesn't derive from sociality: the idea that you can be "who you really are" independent of what socialization makes you, and that you can unilaterally "express yourself" without that self being changed in the process of participating in society. Predictive AI participates in that delusion, insisting on selves basically made up of lossy data, information with much of the social context subtracted. This is also why "personal data" can be an extremely misleading phrase when applied to behavioral data: None of it pertains to a single person but to irreducibly complex social contexts.

Algorithmic prediction systems may replace "social space" to a degree, in part because they are imposed on people, in part because we choose them as a convenient expedient that lets us seem to escape or rise above sociality. This leads to the scenario Pinkard describes, in which one has a "character" without it having to be expressed in social contexts; instead it is reflected back to one in the form of "recommended" content. Character ceases to be action and becomes pure consumption.

Letting AI parse our behavior — and believing in its fantasy of a preformed, static inner self — may allow us to appear to evade the vulnerability of having our behavior parsed and determined by other people or by social institutions. But AI is just a proxy for those judgments, situated in a technological apparatus that separates those judgments from accountability and reciprocity. "Having a self" thereby is experienced as antisocial rather than necessarily social.

This is how Pinkard explains that necessary sociality and debunks fantasies like "emotion detection" in the process:

The nature of action is such that its expression in various actions (such as a grimace) is a matter of interpretation by both the agent himself and by others in light of certain social norms. A person's character is inseparable from what he does, and what he does is a matter of interpretation. Character cannot be a "thing" that exists independently of its expressions in various actions. Even what might look like a prime candidate for such "inner" things — namely, one's feelings — are themselves subject to interpretation; one must interpret one's feelings in order to know what one is feeling ... In fact, there is no incontrovertible knowledge of character available either through introspection to the agent himself or to the observer of the agent's face or skull. Neither the agent himself nor his observers can be in a position to say indubitably that this is "who" he is outside of any social context. Each is making an interpretation based on the norms of his time, and each interpretation is fallible.

You can't explain who a person is or what they are feeling by looking at them in isolation and treating them as inert things to be weighed and measured. Feelings are social negotiations; who we are is a matter of how we navigate ever-changing social contexts, how we change ourselves in a changing world. People are not simply "what they do," as if that could be abstracted from all the other conditions that have made for a moment where they could act, or from all that goes into how that action is understood. Many of the fantasies about AI are about obviating that inconvenient condition, making us predictable not just to the state or to advertisers but to ourselves.

In "Hegel on Faces and Skulls," Alasdair MacIntyre points to what, in Hegel's view, is effaced by that fantasy of self-identity (that we can unilaterally be what we do or be what our personal data seems to disclose): namely, the possibility of "becoming."

Let me reiterate the crucial fact about self-consciousness, already brought out in Hegel's discussion of physiognomy; that is, its self-negating quality: being aware of what I am is conceptually inseparable from confronting what I am not but could become. Hence, for a self-conscious agent to have a trait is for that agent to be confronted by an indefinitely large set of possibilities of developing, modifying, or abolishing that trait. Action springs not from fixed and determinate dispositions, but from the confrontation in consciousness of what I am by what I am not.

Any mechanical prediction of who we are precisely captures what we have ceased to be. "Authenticity" is not a matter of "being true to oneself" but a matter of finding ways to move past how one appears in data or even how one appears to oneself in order to become something irreducible. Hegel, for his part, recommends (in paragraph 339) that if anyone tells you that your character is determined by the shape of your skull — if they effectively tell you, "I regard a bone as your reality," as he puts it — you should rebut them on their own terms by bashing their skull in.